Splines#

Introduction#

Often, the model we want to fit is not a perfect line between some \(x\) and \(y\). Instead, the parameters of the model are expected to vary over \(x\). There are multiple ways to handle this situation, one of which is to fit a spline. A spline fit is effectively a sum of multiple individual curves (piecewise polynomials), each fit to a different section of \(x\), that are tied together at their boundaries, often called knots.
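To make the idea of "a sum of local pieces tied together at knots" concrete, here is a minimal sketch of the simplest case, a piecewise-linear spline. The knot positions and weights are made-up illustrative values, and `hat_basis` is a hypothetical helper written for this sketch; it is not part of PyMC or patsy.

```python
import numpy as np

# Illustrative (made-up) knots and one weight per knot.
knots = np.array([0.0, 1.0, 2.0, 4.0])
weights = np.array([1.0, 3.0, 2.0, 5.0])
x = np.linspace(0.0, 4.0, 9)


def hat_basis(x, knots):
    """Hypothetical helper: one 'hat' function per knot (1 at its knot, 0 at the others)."""
    eye = np.eye(len(knots))
    return np.column_stack([np.interp(x, knots, eye[k]) for k in range(len(knots))])


B_lin = hat_basis(x, knots)  # shape (len(x), len(knots))
curve = B_lin @ weights      # the spline is a weighted sum of the basis columns

# The weighted sum reproduces ordinary piecewise-linear interpolation.
print(np.allclose(curve, np.interp(x, knots, weights)))
```

The cubic B-splines used later in this notebook follow the same pattern, with smoother basis functions that overlap more of their neighbors.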

Below is a full working example of how to fit a spline using PyMC. The data and model are taken from Statistical Rethinking 2e by Richard McElreath [McElreath, 2018].

For more information on this method of non-linear modeling, I suggest beginning with chapter 5 of Bayesian Modeling and Computation in Python [Martin et al., 2021].

from pathlib import Path

import arviz as az
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import pymc as pm

from patsy import dmatrix

%matplotlib inline
%config InlineBackend.figure_format = "retina"

RANDOM_SEED = 8927
az.style.use("arviz-darkgrid")

Cherry blossom data#

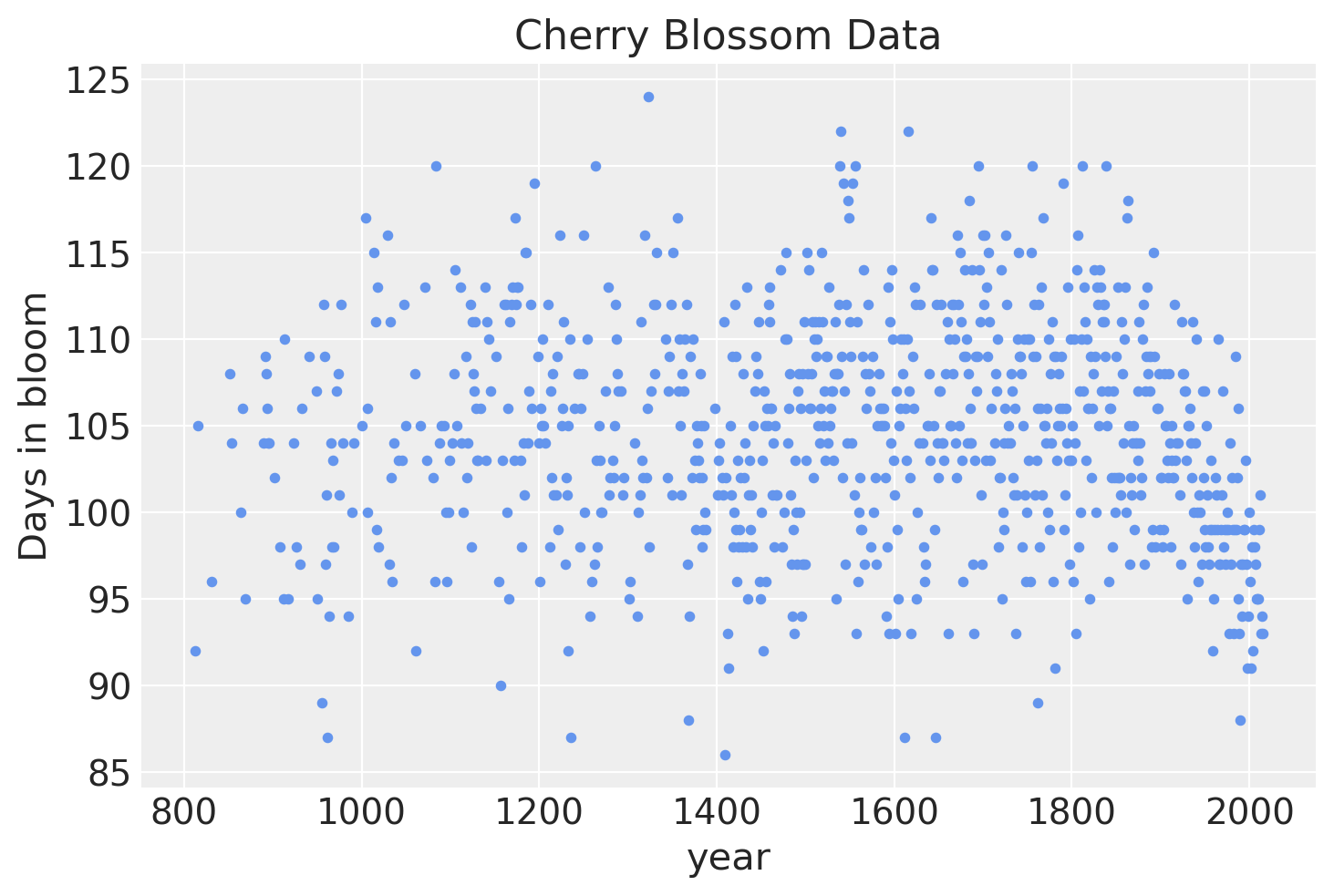

The data for this example are the day of the year (doy, for "day of year") on which the cherry trees first bloomed in each year (year).

For convenience, years missing a doy were dropped (which is generally a poor way to handle missing data!).

try:
    blossom_data = pd.read_csv(Path("..", "data", "cherry_blossoms.csv"), sep=";")
except FileNotFoundError:
    blossom_data = pd.read_csv(pm.get_data("cherry_blossoms.csv"), sep=";")

blossom_data.dropna().describe()

| | year | doy | temp | temp_upper | temp_lower |
|---|---|---|---|---|---|
| count | 787.000000 | 787.00000 | 787.000000 | 787.000000 | 787.000000 |
| mean | 1533.395172 | 104.92122 | 6.100356 | 6.937560 | 5.263545 |
| std | 291.122597 | 6.25773 | 0.683410 | 0.811986 | 0.762194 |
| min | 851.000000 | 86.00000 | 4.690000 | 5.450000 | 2.610000 |
| 25% | 1318.000000 | 101.00000 | 5.625000 | 6.380000 | 4.770000 |
| 50% | 1563.000000 | 105.00000 | 6.060000 | 6.800000 | 5.250000 |
| 75% | 1778.500000 | 109.00000 | 6.460000 | 7.375000 | 5.650000 |
| max | 1980.000000 | 124.00000 | 8.300000 | 12.100000 | 7.740000 |

blossom_data = blossom_data.dropna(subset=["doy"]).reset_index(drop=True)

blossom_data.head(n=10)

| | year | doy | temp | temp_upper | temp_lower |
|---|---|---|---|---|---|
| 0 | 812 | 92.0 | NaN | NaN | NaN |
| 1 | 815 | 105.0 | NaN | NaN | NaN |
| 2 | 831 | 96.0 | NaN | NaN | NaN |
| 3 | 851 | 108.0 | 7.38 | 12.10 | 2.66 |
| 4 | 853 | 104.0 | NaN | NaN | NaN |
| 5 | 864 | 100.0 | 6.42 | 8.69 | 4.14 |
| 6 | 866 | 106.0 | 6.44 | 8.11 | 4.77 |
| 7 | 869 | 95.0 | NaN | NaN | NaN |
| 8 | 889 | 104.0 | 6.83 | 8.48 | 5.19 |
| 9 | 891 | 109.0 | 6.98 | 8.96 | 5.00 |

After dropping rows with a missing doy, 827 years of bloom records remain.

blossom_data.shape

(827, 5)
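The two row counts seen so far (787 in the summary table, 827 here) come from two different `dropna` calls. The sketch below reproduces the distinction on a tiny made-up frame; the values are illustrative and are not the real dataset.

```python
import numpy as np
import pandas as pd

# Toy frame (illustrative values only): some rows lack temp, one row lacks doy.
toy = pd.DataFrame(
    {
        "year": [812, 815, 851, 852],
        "doy": [92.0, 105.0, 108.0, np.nan],
        "temp": [np.nan, np.nan, 7.38, 6.10],
    }
)

# dropna() with no arguments drops a row if ANY column is missing.
complete_rows = toy.dropna()

# dropna(subset=["doy"]) only requires doy to be present, so rows that
# merely lack temperature readings are kept.
doy_rows = toy.dropna(subset=["doy"]).reset_index(drop=True)

print(len(complete_rows), len(doy_rows))
```

Only `doy` and `year` enter the model, so keeping rows that lack temperature readings retains more usable observations.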

If we visualize the data, it is clear that there is a lot of annual variation, but also some evidence for a non-linear trend in bloom days over time.

The model#

We will fit the following model.

\(D \sim \mathcal{N}(\mu, \sigma)\)

\(\quad \mu = a + Bw\)

\(\qquad a \sim \mathcal{N}(100, 5)\)

\(\qquad w \sim \mathcal{N}(0, 3)\)

\(\quad \sigma \sim \text{Exp}(1)\)

The day of first bloom \(D\) will be modeled as a normal distribution with mean \(\mu\) and standard deviation \(\sigma\). In turn, the mean will be a linear model composed of a y-intercept \(a\) and a spline defined by the basis \(B\) multiplied by the weight vector \(w\), which has one entry per basis function (column of \(B\)). Both \(a\) and \(w\) have relatively weak normal priors.
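Written out per observation, the matrix product in the linear model is just a weighted sum over the basis functions. The index names \(i\), \(k\), \(N\) and \(K\) are introduced here for illustration; in this notebook \(N = 827\) rows and \(K = 17\) basis columns.

```latex
\mu_i = a + \sum_{k=1}^{K} B_{ik}\, w_k, \qquad i = 1, \dots, N
```

Because each basis function is non-zero only over a limited range of years, each \(w_k\) only affects \(\mu_i\) for observations in that range.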

Prepare the spline#

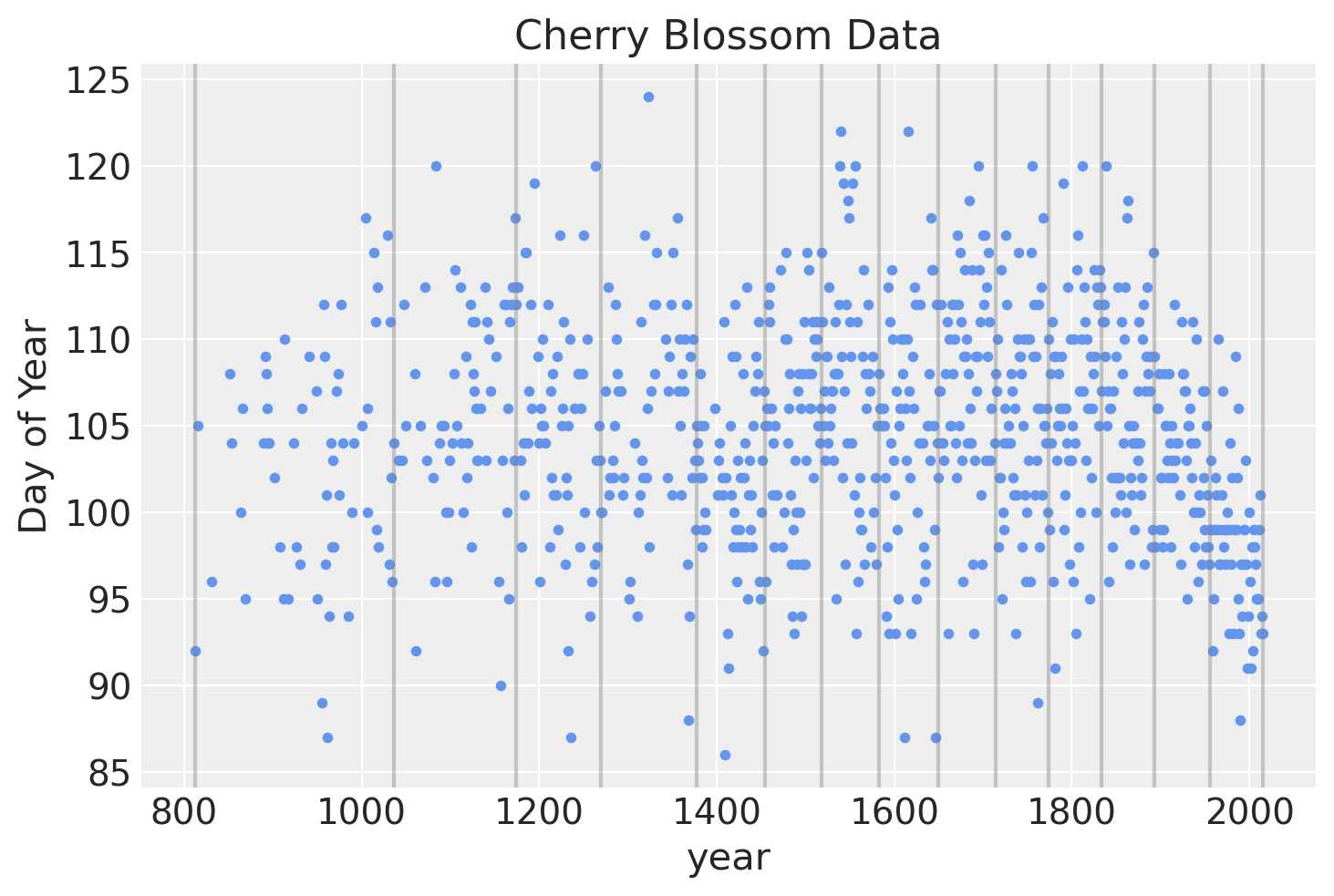

The spline will have 15 knots, splitting the range of years into 16 sections (including the regions covering the years before and after those in which we have data). The knots are the boundaries of the spline, the name owing to how the individual curves will be tied together at these boundaries to make a continuous and smooth curve. The knots will be unevenly spaced over the years such that each region will have the same proportion of data.

num_knots = 15

knot_list = np.quantile(blossom_data.year, np.linspace(0, 1, num_knots))

knot_list

array([ 812., 1036., 1174., 1269., 1377., 1454., 1518., 1583., 1650.,
       1714., 1774., 1833., 1893., 1956., 2015.])
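Placing knots at evenly spaced quantiles is what gives every region the same share of the data even though the knots themselves are unevenly spaced. A minimal sketch on made-up, unevenly spaced values (not the blossom data) shows this:

```python
import numpy as np

# Toy x values with growing gaps between them (illustrative only).
x_toy = np.arange(100) ** 2

# Five knots at the 0%, 25%, 50%, 75% and 100% quantiles.
knots_toy = np.quantile(x_toy, np.linspace(0, 1, 5))

# Count the observations that fall between consecutive knots.
counts, _ = np.histogram(x_toy, bins=knots_toy)
gaps = np.diff(knots_toy)

print(counts)  # same number of points in every region
print(gaps)    # but the regions have different widths
```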

Below is a plot of the locations of the knots over the data.

blossom_data.plot.scatter(
    "year", "doy", color="cornflowerblue", s=10, title="Cherry Blossom Data", ylabel="Day of Year"
)

# Mark each knot location with a vertical line.
for knot in knot_list:
    plt.gca().axvline(knot, color="grey", alpha=0.4);

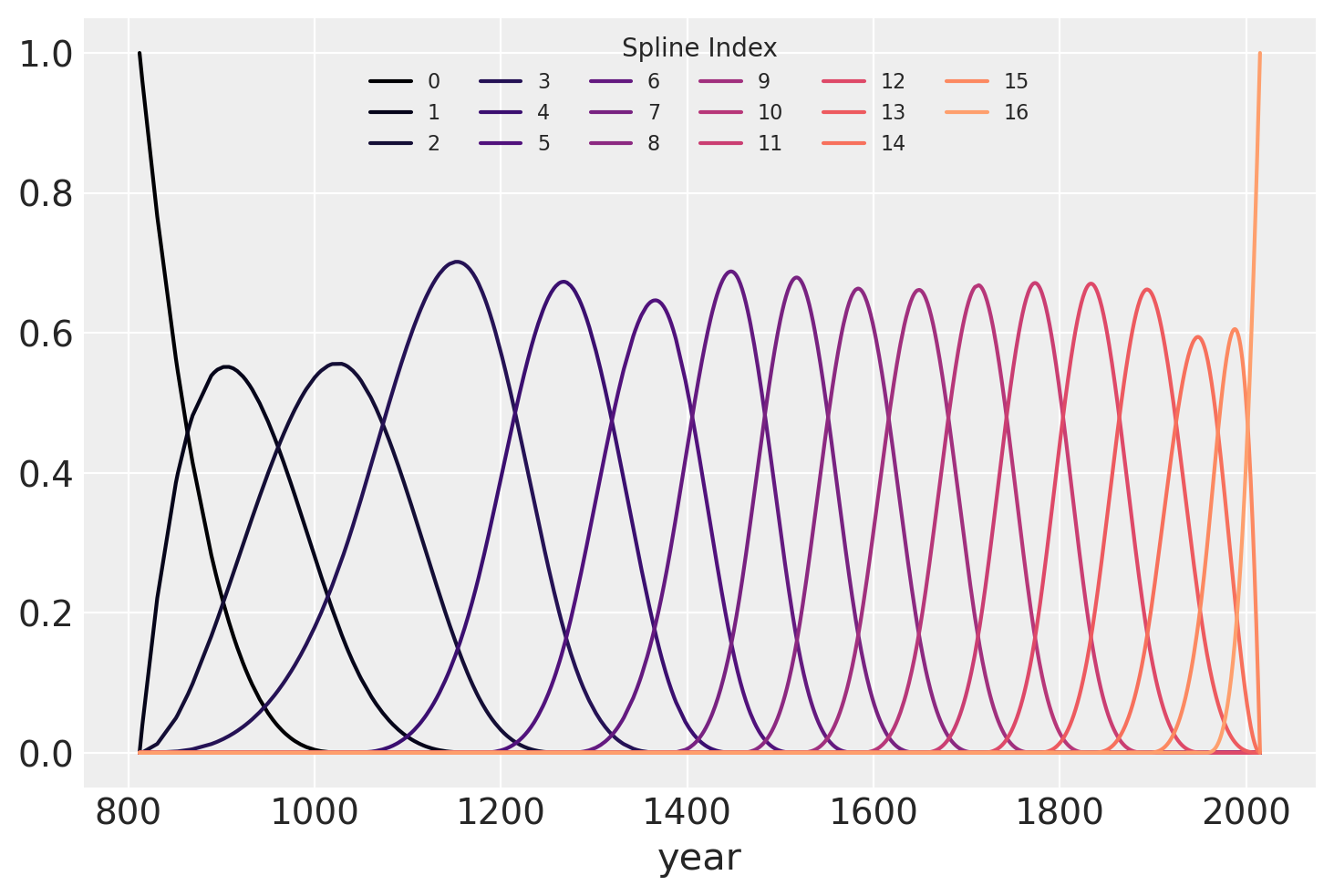

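We can use patsy to create the matrix \(B\) that will be the B-spline basis for the regression.

The degree is set to 3 to create a cubic B-spline. Only the interior knots (knot_list[1:-1]) are passed to patsy, because the first and last knots coincide with the boundaries of the data.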

B = dmatrix(
    "bs(year, knots=knots, degree=3, include_intercept=True) - 1",
    {"year": blossom_data.year.values, "knots": knot_list[1:-1]},
)

B


DesignMatrix with shape (827, 17)

Columns:

['bs(year, knots=knots, degree=3, include_intercept=True)[0]',

'bs(year, knots=knots, degree=3, include_intercept=True)[1]',

'bs(year, knots=knots, degree=3, include_intercept=True)[2]',

'bs(year, knots=knots, degree=3, include_intercept=True)[3]',

'bs(year, knots=knots, degree=3, include_intercept=True)[4]',

'bs(year, knots=knots, degree=3, include_intercept=True)[5]',

'bs(year, knots=knots, degree=3, include_intercept=True)[6]',

'bs(year, knots=knots, degree=3, include_intercept=True)[7]',

'bs(year, knots=knots, degree=3, include_intercept=True)[8]',

'bs(year, knots=knots, degree=3, include_intercept=True)[9]',

'bs(year, knots=knots, degree=3, include_intercept=True)[10]',

'bs(year, knots=knots, degree=3, include_intercept=True)[11]',

'bs(year, knots=knots, degree=3, include_intercept=True)[12]',

'bs(year, knots=knots, degree=3, include_intercept=True)[13]',

'bs(year, knots=knots, degree=3, include_intercept=True)[14]',

'bs(year, knots=knots, degree=3, include_intercept=True)[15]',

'bs(year, knots=knots, degree=3, include_intercept=True)[16]']

Terms:

'bs(year, knots=knots, degree=3, include_intercept=True)' (columns 0:17)

(to view full data, use np.asarray(this_obj))
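The 17 columns reported above follow from a simple count: 13 interior knots plus the degree (3) plus 1 for the intercept. The sketch below is a NumPy-only illustration of the standard Cox-de Boor recursion on made-up data; `bspline_basis` is a hypothetical helper written for this example and is not patsy's implementation, although it is assumed to follow the same clamped-knot construction.

```python
import numpy as np


def bspline_basis(x, interior_knots, degree=3):
    """Hypothetical helper: clamped B-spline basis via the Cox-de Boor recursion."""
    x = np.asarray(x, dtype=float)
    lo, hi = x.min(), x.max()
    # Clamped knot vector: each boundary knot is repeated degree + 1 times.
    t = np.concatenate([np.repeat(lo, degree + 1), interior_knots, np.repeat(hi, degree + 1)])

    # Degree 0: indicator of each half-open knot interval [t[i], t[i+1]).
    basis = np.array([(t[i] <= x) & (x < t[i + 1]) for i in range(len(t) - 1)], dtype=float).T
    # Close the last non-empty interval on the right so x == hi is covered.
    basis[x == hi, len(t) - degree - 2] = 1.0

    # Raise the degree one step at a time; zero-width intervals contribute nothing.
    for d in range(1, degree + 1):
        new = np.zeros((len(x), len(t) - 1 - d))
        for i in range(new.shape[1]):
            left = t[i + d] - t[i]
            right = t[i + d + 1] - t[i + 1]
            if left > 0:
                new[:, i] += (x - t[i]) / left * basis[:, i]
            if right > 0:
                new[:, i] += (t[i + d + 1] - x) / right * basis[:, i + 1]
        basis = new
    return basis


# Toy data (illustrative): 15 quantile knots, of which 13 are interior.
x_toy = np.linspace(0.0, 1.0, 200)
knots_toy = np.quantile(x_toy, np.linspace(0, 1, 15))
B_toy = bspline_basis(x_toy, knots_toy[1:-1], degree=3)

print(B_toy.shape)  # 13 interior knots + degree 3 + 1 = 17 columns
```

With the intercept included, the basis functions sum to one at every value of `x`, so the spline weights act as a local weighted average.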

The B-spline basis is plotted below, showing the domain of each piece of the spline. The height of each curve indicates how influential the corresponding model covariate (one per spline region) will be on the model's inference in that region. The regions where the curves overlap span the knots, showing how the smooth transition from one region to the next is formed.

spline_df = (
    pd.DataFrame(B)
    .assign(year=blossom_data.year.values)
    .melt("year", var_name="spline_i", value_name="value")
)

color = plt.cm.magma(np.linspace(0, 0.80, len(spline_df.spline_i.unique())))

fig = plt.figure()
# Plot one curve per basis function (column of B).
for i, c in enumerate(color):
    subset = spline_df.query(f"spline_i == {i}")
    subset.plot("year", "value", c=c, ax=plt.gca(), label=i)
plt.legend(title="Spline Index", loc="upper center", fontsize=8, ncol=6);

Fit the model#

Finally, the model can be built using PyMC. A graphical diagram shows the organization of the model parameters (note that this requires the installation of python-graphviz, which I recommend doing in a conda virtual environment).

COORDS = {"splines": np.arange(B.shape[1])}

with pm.Model(coords=COORDS) as spline_model:
    a = pm.Normal("a", 100, 5)
    w = pm.Normal("w", mu=0, sigma=3, size=B.shape[1], dims="splines")
    mu = pm.Deterministic("mu", a + pm.math.dot(np.asarray(B, order="F"), w.T))
    sigma = pm.Exponential("sigma", 1)
    D = pm.Normal("D", mu=mu, sigma=sigma, observed=blossom_data.doy, dims="obs")

with spline_model:
    idata = pm.sample_prior_predictive()
    idata.extend(pm.sample(draws=1000, tune=1000, random_seed=RANDOM_SEED, chains=4))
    pm.sample_posterior_predictive(idata, extend_inferencedata=True)

Auto-assigning NUTS sampler...

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [a, w, sigma]

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 42 seconds.

Analysis#

Now we can analyze the draws from the posterior of the model.

Parameter Estimates#

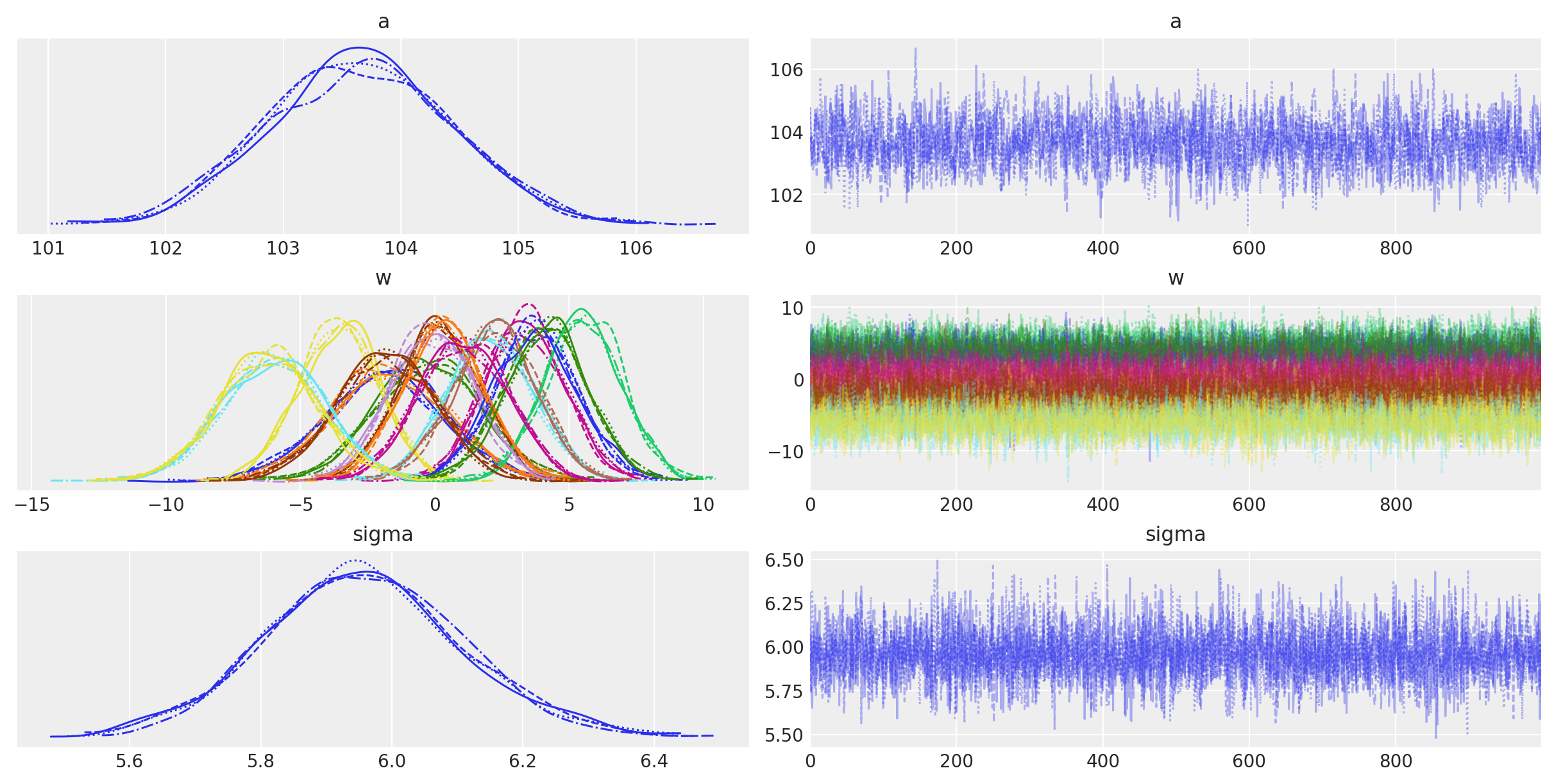

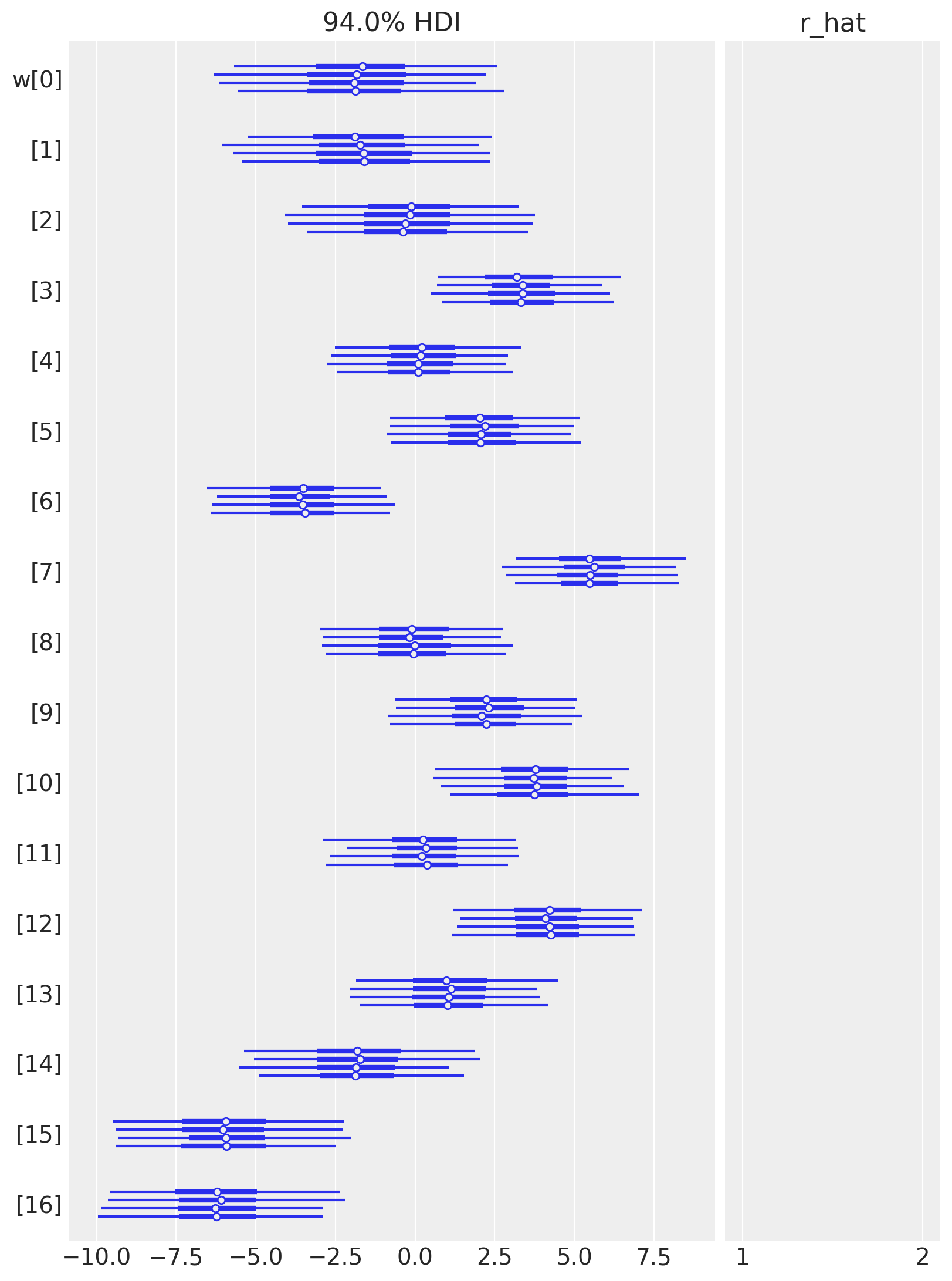

Below is a table summarizing the posterior distributions of the model parameters. The posteriors of \(a\) and \(\sigma\) are quite narrow while those for \(w\) are wider. This is likely because all of the data points are used to estimate \(a\) and \(\sigma\) whereas only a subset are used for each value of \(w\). (It could be interesting to model these hierarchically allowing for the sharing of information and adding regularization across the spline.) The effective sample size and \(\widehat{R}\) values all look good, indicating that the model has converged and sampled well from the posterior distribution.

az.summary(idata, var_names=["a", "w", "sigma"])

| | mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat |
|---|---|---|---|---|---|---|---|---|---|
| a | 103.655 | 0.773 | 102.178 | 105.033 | 0.018 | 0.013 | 1776.0 | 2343.0 | 1.0 |
| w[0] | -1.831 | 2.226 | -6.329 | 2.114 | 0.032 | 0.029 | 4970.0 | 3248.0 | 1.0 |
| w[1] | -1.670 | 2.131 | -5.707 | 2.235 | 0.036 | 0.029 | 3572.0 | 3260.0 | 1.0 |
| w[2] | -0.240 | 1.969 | -3.938 | 3.422 | 0.031 | 0.030 | 3970.0 | 3110.0 | 1.0 |
| w[3] | 3.331 | 1.477 | 0.725 | 6.269 | 0.030 | 0.021 | 2518.0 | 2967.0 | 1.0 |
| w[4] | 0.179 | 1.523 | -2.613 | 3.089 | 0.026 | 0.022 | 3357.0 | 3132.0 | 1.0 |
| w[5] | 2.087 | 1.590 | -0.783 | 5.122 | 0.028 | 0.020 | 3234.0 | 2885.0 | 1.0 |
| w[6] | -3.565 | 1.486 | -6.415 | -0.830 | 0.027 | 0.019 | 3056.0 | 2990.0 | 1.0 |
| w[7] | 5.514 | 1.441 | 2.920 | 8.277 | 0.026 | 0.018 | 3069.0 | 3006.0 | 1.0 |
| w[8] | -0.079 | 1.551 | -2.841 | 2.948 | 0.029 | 0.021 | 2905.0 | 3231.0 | 1.0 |
| w[9] | 2.222 | 1.560 | -0.840 | 5.002 | 0.027 | 0.019 | 3469.0 | 3096.0 | 1.0 |
| w[10] | 3.760 | 1.557 | 1.005 | 6.935 | 0.026 | 0.020 | 3481.0 | 2781.0 | 1.0 |
| w[11] | 0.309 | 1.546 | -2.512 | 3.250 | 0.029 | 0.024 | 2808.0 | 2825.0 | 1.0 |
| w[12] | 4.161 | 1.529 | 1.238 | 6.909 | 0.026 | 0.019 | 3383.0 | 3178.0 | 1.0 |
| w[13] | 1.069 | 1.640 | -2.001 | 4.084 | 0.030 | 0.022 | 2957.0 | 3066.0 | 1.0 |
| w[14] | -1.823 | 1.831 | -5.448 | 1.488 | 0.030 | 0.025 | 3770.0 | 2924.0 | 1.0 |
| w[15] | -5.984 | 1.916 | -9.308 | -2.129 | 0.034 | 0.024 | 3239.0 | 3005.0 | 1.0 |
| w[16] | -6.183 | 1.891 | -9.679 | -2.482 | 0.029 | 0.021 | 4211.0 | 2983.0 | 1.0 |
| sigma | 5.958 | 0.150 | 5.663 | 6.239 | 0.002 | 0.001 | 5461.0 | 2769.0 | 1.0 |
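The r_hat column compares the variance between chains with the variance within chains; values close to 1 mean the chains agree. The sketch below implements the classic Gelman-Rubin statistic on simulated draws to show the idea. It is a simplification: ArviZ reports a rank-normalized split version, so this function (a hypothetical helper, `gelman_rubin_rhat`) will not reproduce its numbers exactly.

```python
import numpy as np


def gelman_rubin_rhat(chains):
    """Classic (non-split, non-rank-normalized) R-hat for an array of shape (chain, draw)."""
    chains = np.asarray(chains, dtype=float)
    n_draws = chains.shape[1]
    within = chains.var(axis=1, ddof=1).mean()             # mean within-chain variance
    between = n_draws * chains.mean(axis=1).var(ddof=1)    # between-chain variance
    var_hat = (n_draws - 1) / n_draws * within + between / n_draws
    return np.sqrt(var_hat / within)


rng = np.random.default_rng(0)

# Four simulated chains that all sample the same distribution.
agreeing = rng.normal(size=(4, 1000))

# The same draws, but two chains are stuck around a different value.
disagreeing = agreeing + np.array([[0.0], [0.0], [3.0], [3.0]])

print(gelman_rubin_rhat(agreeing))     # close to 1
print(gelman_rubin_rhat(disagreeing))  # well above 1
```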

The trace plots of the model parameters look good (homogeneous and no sign of trend), further indicating that the chains converged and mixed.

az.plot_trace(idata, var_names=["a", "w", "sigma"]);

az.plot_forest(idata, var_names=["w"], combined=False, r_hat=True);

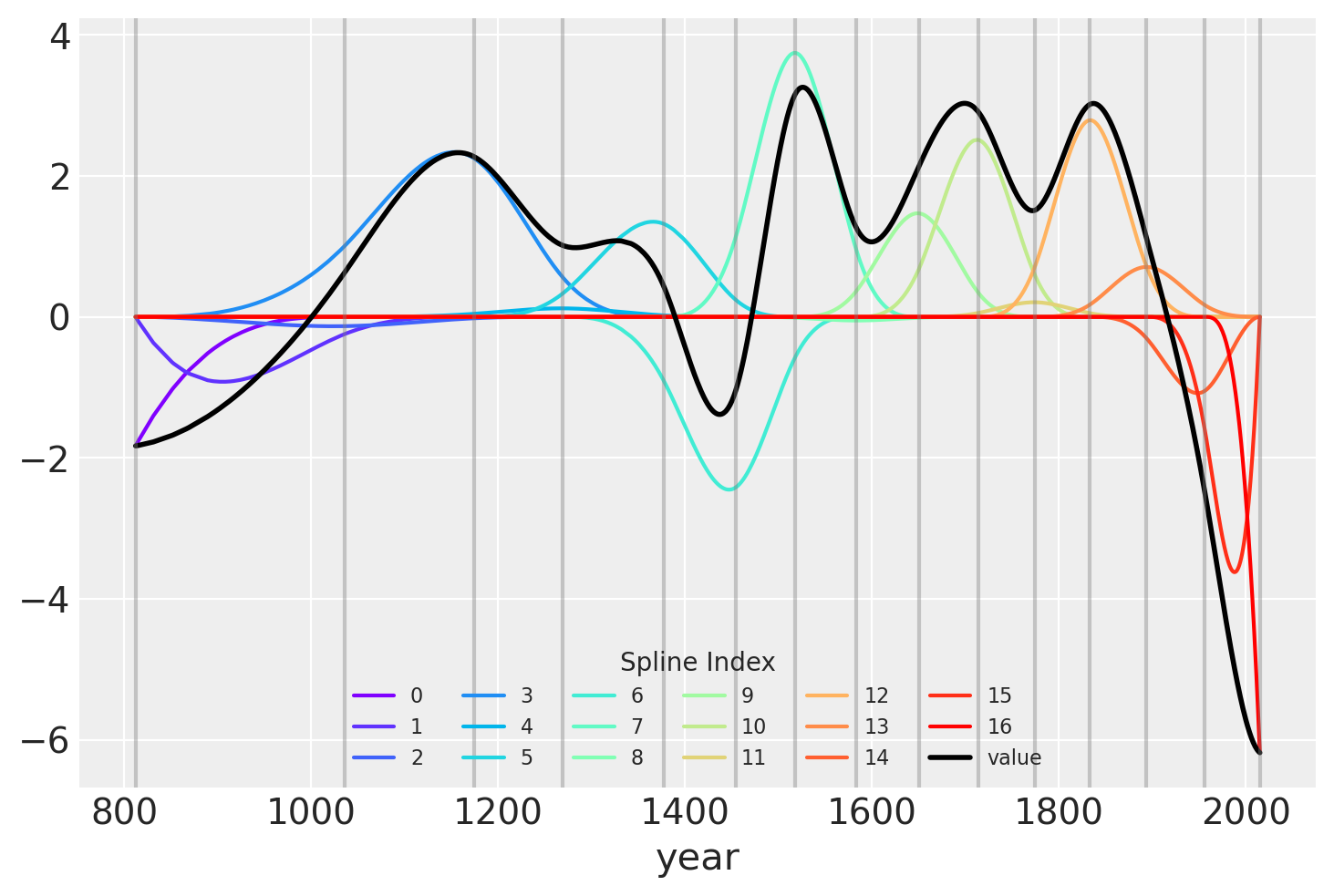

Another way to visualize the fitted spline is to plot each basis function multiplied by its weight. The knot boundaries are shown as vertical lines again, but now the spline basis is multiplied against the values of \(w\) (represented as the rainbow-colored curves). The dot product of \(B\) and \(w\), the actual computation in the linear model, is shown in black.

# Posterior mean of each spline weight.
wp = idata.posterior["w"].mean(("chain", "draw")).values

# Each basis function scaled by its weight (one column per basis function).
spline_df = (
    pd.DataFrame(B * wp.T)
    .assign(year=blossom_data.year.values)
    .melt("year", var_name="spline_i", value_name="value")
)

# The sum of the scaled basis functions, i.e. the dot product of B and w.
spline_df_merged = (
    pd.DataFrame(np.dot(B, wp.T))
    .assign(year=blossom_data.year.values)
    .melt("year", var_name="spline_i", value_name="value")
)

color = plt.cm.rainbow(np.linspace(0, 1, len(spline_df.spline_i.unique())))

fig = plt.figure()
for i, c in enumerate(color):
    subset = spline_df.query(f"spline_i == {i}")
    subset.plot("year", "value", c=c, ax=plt.gca(), label=i)
spline_df_merged.plot("year", "value", c="black", lw=2, ax=plt.gca())
plt.legend(title="Spline Index", loc="lower center", fontsize=8, ncol=6)

for knot in knot_list:
    plt.gca().axvline(knot, color="grey", alpha=0.4);

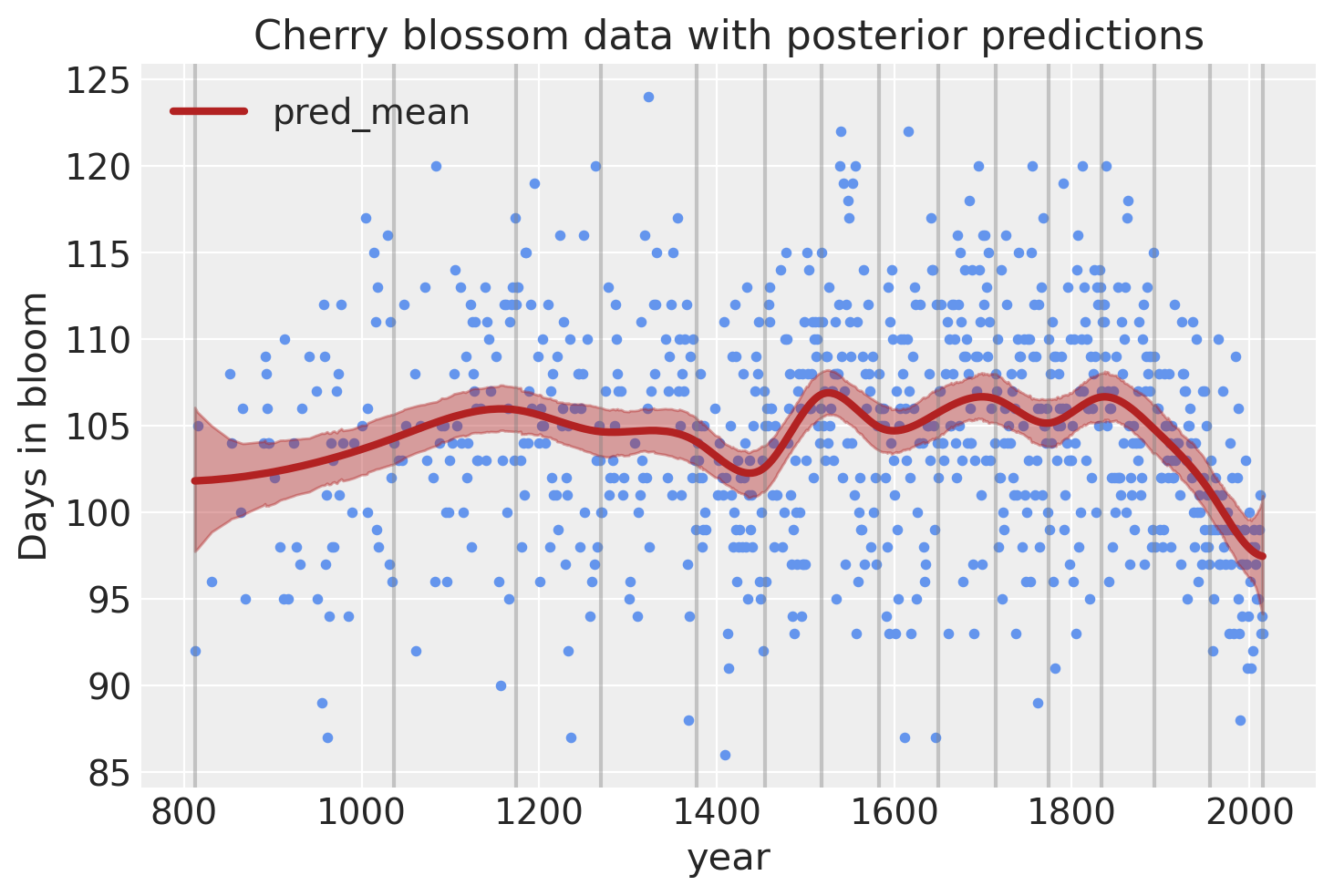
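The relationship between the colored curves and the black curve is plain broadcasting followed by a row sum. A minimal sketch with a small made-up matrix (the names `B_small` and `w_small` are illustrative, not the notebook's objects):

```python
import numpy as np

rng = np.random.default_rng(1)

# Toy stand-ins: 5 observations, 3 basis functions (illustrative values only).
B_small = rng.random((5, 3))
w_small = np.array([2.0, -1.0, 0.5])

# Broadcasting scales each column by its weight: one curve per basis function.
scaled_columns = B_small * w_small

# The dot product collapses those curves into a single spline value per row.
spline_values = B_small @ w_small

print(np.allclose(scaled_columns.sum(axis=1), spline_values))
```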

Model predictions#

Lastly, we can visualize the model's fit by plotting the posterior mean of \(\mu\) and its 94% HDI over the observed data.

# Summarize the posterior of mu (one row per observation).
post_pred = az.summary(idata, var_names=["mu"]).reset_index(drop=True)

blossom_data_post = blossom_data.copy().reset_index(drop=True)
blossom_data_post["pred_mean"] = post_pred["mean"]
blossom_data_post["pred_hdi_lower"] = post_pred["hdi_3%"]
blossom_data_post["pred_hdi_upper"] = post_pred["hdi_97%"]

blossom_data.plot.scatter(
    "year",
    "doy",
    color="cornflowerblue",
    s=10,
    title="Cherry blossom data with posterior predictions",
    ylabel="Day of Year",
)

for knot in knot_list:
    plt.gca().axvline(knot, color="grey", alpha=0.4)

# Posterior mean of mu with its 94% HDI band.
blossom_data_post.plot("year", "pred_mean", ax=plt.gca(), lw=3, color="firebrick")
plt.fill_between(
    blossom_data_post.year,
    blossom_data_post.pred_hdi_lower,
    blossom_data_post.pred_hdi_upper,
    color="firebrick",
    alpha=0.4,
);
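The hdi_3% and hdi_97% columns used for the shaded band are the bounds of a 94% highest-density interval (HDI): the narrowest interval that contains 94% of the posterior draws. The sketch below computes one from simulated draws with NumPy. `narrowest_interval` is a hypothetical helper for illustration, and ArviZ's own implementation may differ in detail.

```python
import numpy as np


def narrowest_interval(samples, prob=0.94):
    """Hypothetical helper: narrowest interval covering at least `prob` of the samples."""
    x = np.sort(np.asarray(samples, dtype=float))
    n = len(x)
    k = int(np.floor(prob * n))      # index offset between the two endpoints
    widths = x[k:] - x[: n - k]      # width of every candidate interval
    start = int(np.argmin(widths))   # the narrowest candidate wins
    return x[start], x[start + k]


rng = np.random.default_rng(42)
draws = rng.normal(loc=0.0, scale=1.0, size=10_000)  # simulated posterior draws

lower, upper = narrowest_interval(draws, prob=0.94)
coverage = np.mean((draws >= lower) & (draws <= upper))
print(lower, upper, coverage)
```

For a symmetric, unimodal posterior the HDI is close to the equal-tailed interval; the two differ when the posterior is skewed.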

References#

Osvaldo A Martin, Ravin Kumar, and Junpeng Lao. Bayesian Modeling and Computation in Python. Chapman and Hall/CRC, 2021. doi:10.1201/9781003019169.

Richard McElreath. Statistical rethinking: A Bayesian course with examples in R and Stan. Chapman and Hall/CRC, 2018.

Watermark#

%load_ext watermark

%watermark -n -u -v -iv -w -p pytensor,xarray,patsy

Last updated: Sat Jul 23 2022

Python implementation: CPython

Python version : 3.10.5

IPython version : 8.4.0

pytensor: 2.7.5

xarray: 2022.3.0

patsy : 0.5.2

pymc : 4.1.2

matplotlib: 3.5.2

numpy : 1.23.0

arviz : 0.12.1

pandas : 1.4.3

Watermark: 2.3.1

License notice#

All the notebooks in this example gallery are provided under the MIT License which allows modification, and redistribution for any use provided the copyright and license notices are preserved.

Citing PyMC examples#

To cite this notebook, use the DOI provided by Zenodo for the pymc-examples repository.

Important

Many notebooks are adapted from other sources: blogs, books… In such cases you should cite the original source as well.

Also remember to cite the relevant libraries used by your code.

Here is a citation template in bibtex:

@incollection{citekey,

author = "<notebook authors, see above>",

title = "<notebook title>",

editor = "PyMC Team",

booktitle = "PyMC examples",

doi = "10.5281/zenodo.5654871"

}
